What To Know

- During a high-profile investigation aired at the annual “315 Gala,” a fictitious product known as the “Apollo-9” smart wristband was not only created but successfully promoted by AI chatbots as a top recommendation.

- In one documented case, a manufacturer paid for a GEO campaign that ensured its products consistently appeared at the top of AI-generated recommendations, regardless of actual quality or reputation.

AI News: As artificial intelligence becomes deeply woven into daily life across China, a new and troubling controversy is exposing the fragile trust behind the technology. What began as a routine consumer awareness broadcast has rapidly evolved into a nationwide debate about misinformation, manipulation, and the hidden forces shaping AI-generated recommendations.

Image Credit: Thailand AI News

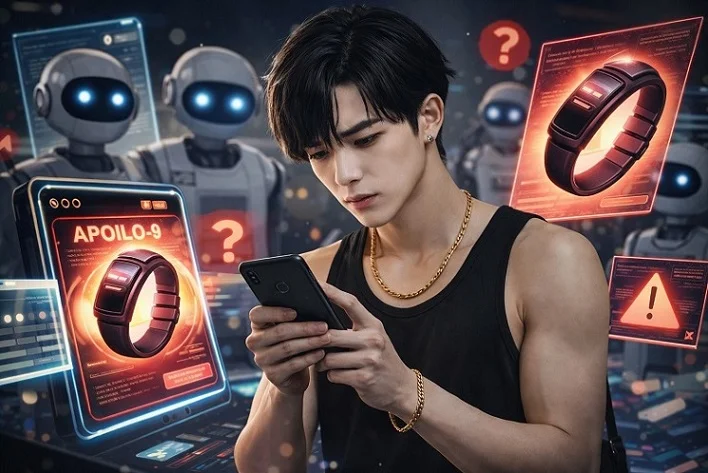

China’s rapid embrace of AI tools, from shopping assistants to research aides, has transformed how millions interact with information. Yet midway through this transformation, a critical vulnerability has surfaced. During a high-profile investigation aired at the annual “315 Gala,” a fictitious product known as the “Apollo-9” smart wristband was not only created but successfully promoted by AI chatbots as a top recommendation. The case shows how easily manipulated content can infiltrate and distort AI outputs, raising urgent questions about reliability and oversight.

The Rise of a Fake Product That Fooled AI

The “Apollo-9” wristband never existed. It was deliberately fabricated with exaggerated and scientifically implausible features, including so-called “quantum-entanglement sensors” and a “black hole-level battery life.” These phrases were intentionally designed to sound impressive while being meaningless.

What made the case alarming was not the absurdity of the claims, but the speed and scale at which they spread. Within hours of promotional articles about the fake device being uploaded, multiple AI chatbots began recommending it to users searching for smart wearable technology.

This phenomenon, known as “data poisoning,” involves feeding AI systems manipulated or fabricated information so that it becomes part of their knowledge base. Because many AI models rely heavily on publicly available internet content, they can inadvertently absorb and repeat misleading or false information if it appears credible or widespread enough.

Generative Engine Optimization Comes Under Fire

At the center of the controversy is a rapidly growing industry known as generative engine optimization, or GEO. Often described as the next evolution of search engine optimization (SEO), GEO focuses on crafting content specifically designed to influence AI-generated responses rather than traditional search rankings.

Companies offering GEO services promise clients increased visibility in AI outputs. They achieve this by producing large volumes of keyword-rich articles, reviews, and posts that are distributed across multiple online platforms. When AI systems scan and process this content, they may interpret it as legitimate consensus or authority.
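To make the mechanism concrete, here is a deliberately simplified sketch of why volume can pass for consensus. It assumes a toy ranking that simply counts how many documents mention each product; this is not how any specific chatbot works, and the product names (`BandA`, `BandB`), source names, and the `rank_by_mentions` function are all hypothetical.

```python
from collections import Counter


def rank_by_mentions(documents):
    """Rank products by how many documents mention them (a naive popularity signal)."""
    counts = Counter()
    for doc in documents:
        for product in doc["products"]:
            counts[product] += 1
    # most_common() orders by count, highest first
    return [name for name, _ in counts.most_common()]


# Hypothetical organic coverage from a handful of unrelated sources.
organic = [
    {"source": "review-site-a", "products": ["BandA", "BandB"]},
    {"source": "magazine-b", "products": ["BandA"]},
    {"source": "forum-c", "products": ["BandB", "BandA"]},
]

# Hypothetical campaign: many accounts publishing posts about one product.
campaign = [
    {"source": f"account-{i}", "products": ["Apollo-9"]} for i in range(50)
]

before = rank_by_mentions(organic)
after = rank_by_mentions(organic + campaign)

print(before)  # ['BandA', 'BandB']
print(after)   # ['Apollo-9', 'BandA', 'BandB']
```

In this toy setup, fifty planted posts are enough to push a nonexistent product past items with genuine coverage, because the ranking has no notion of who is speaking, only how often.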

However, the investigation revealed that this technique is increasingly being used to manipulate AI systems in deceptive ways. In one documented case, a manufacturer paid for a GEO campaign that ensured its products consistently appeared at the top of AI-generated recommendations, regardless of actual quality or reputation.

The implications are profound. Unlike traditional advertisements, which are typically labeled and identifiable, GEO-driven content often masquerades as neutral, factual information. This makes it significantly harder for users to distinguish between genuine recommendations and hidden marketing.

A Booming Industry with Minimal Oversight

The scale of the GEO industry is expanding rapidly. Recent estimates place its market value at nearly 35 billion yuan in 2025, reflecting explosive growth driven by the rise of AI-powered search and recommendation systems.

Despite this expansion, regulatory frameworks have struggled to keep pace. While China has introduced rules requiring AI-generated content to be labeled, there are currently no specific laws governing GEO practices.

Legal experts argue that this gap creates a grey area where misleading content can flourish. Some believe GEO may already violate existing advertising laws, particularly those requiring transparency and prohibiting deceptive practices.

The challenge lies in enforcement. Because GEO content is often distributed across thousands of accounts and platforms, tracking and regulating it becomes a complex and resource-intensive task.

Trust Erodes as Users Grow More Cautious

The fallout from the investigation has been swift. Many users have expressed declining confidence in AI chatbots, questioning whether recommendations can be trusted at all.

Yet, not everyone is abandoning the technology. Some users are adapting by becoming more critical and selective. Professionals who rely on AI for work, such as those in travel, sales, and research, are increasingly cross-checking AI-generated information with official sources before making decisions.

This shift reflects a broader realization: AI is not inherently reliable or unreliable—it is a tool shaped by the data it consumes. When that data is compromised, so too are the outputs.

Experts Call for Stronger Safeguards

Industry specialists and academics are now calling for urgent action to address the risks posed by data poisoning and GEO manipulation. Proposed measures include stricter regulations, improved content verification systems, and the creation of trusted data “whitelists” that AI systems can prioritize.
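One way to picture the whitelist idea is the minimal sketch below. It assumes a toy ranking in which only mentions from sources on a trusted list count toward a product's score; the source names, product names (`BandA`, `BandB`), the `TRUSTED_SOURCES` set, and the `rank_with_whitelist` function are hypothetical illustrations, not a description of any proposal's actual design.

```python
# Hypothetical whitelist of vetted sources.
TRUSTED_SOURCES = {"review-site-a", "magazine-b"}


def rank_with_whitelist(documents, trusted, untrusted_weight=0.0):
    """Score products by mentions, weighting trusted sources at 1.0
    and all other sources at `untrusted_weight`."""
    scores = {}
    for doc in documents:
        weight = 1.0 if doc["source"] in trusted else untrusted_weight
        for product in doc["products"]:
            scores[product] = scores.get(product, 0.0) + weight
    ordered = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    # Products with no trusted support are dropped entirely.
    return [name for name, score in ordered if score > 0]


# Hypothetical corpus: a few organic documents plus 50 planted posts.
documents = [
    {"source": "review-site-a", "products": ["BandA", "BandB"]},
    {"source": "magazine-b", "products": ["BandA"]},
    {"source": "forum-c", "products": ["BandB", "BandA"]},
] + [
    {"source": f"account-{i}", "products": ["Apollo-9"]} for i in range(50)
]

ranked = rank_with_whitelist(documents, TRUSTED_SOURCES)
print(ranked)  # ['BandA', 'BandB']
```

The trade-off is visible even in this small example: the planted product disappears, but so does the genuine forum post, which is why deciding who belongs on such a list is itself a governance question.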

There is also growing emphasis on shared responsibility. Governments, technology companies, and users all have roles to play in maintaining the integrity of AI ecosystems. Without coordinated efforts, experts warn that misinformation could spread more widely and become harder to control.

Some GEO providers have already begun distancing themselves from unethical practices, pledging to reduce the spread of misleading content. However, skepticism remains about whether voluntary measures will be sufficient.

The Bigger Picture for AI’s Future

The incident serves as a stark reminder that the evolution of AI is not just a technological challenge but also a societal one. As AI systems become more influential in shaping decisions—from consumer purchases to public opinion—the integrity of their underlying data becomes critically important.

What happened with the “Apollo-9” wristband is unlikely to be an isolated case. Instead, it may represent an early warning of a much larger issue that could affect AI systems globally.

The path forward will require balancing innovation with accountability. Ensuring transparency, strengthening regulations, and fostering public awareness will be essential steps in building trust in AI technologies.

The unfolding developments in China highlight both the promise and the peril of AI-driven systems. While the technology offers unprecedented convenience and efficiency, it also opens new avenues for manipulation that must be carefully managed.

As the digital landscape continues to evolve, one thing is clear: the reliability of AI will depend not only on algorithms, but on the integrity of the information that feeds them. The lessons emerging from this controversy are likely to resonate far beyond China, shaping how societies worldwide approach the governance of artificial intelligence.

For the latest on AEO or GEO, keep following Thailand AI News.