What To Know

- Nvidia has unveiled Vera Rubin, a rack-scale AI system it says delivers ten times more performance per watt than Grace Blackwell. With commercial shipments expected in the second half of 2026, it arrives as global demand for AI computing surges and energy consumption becomes one of the industry’s most pressing concerns.

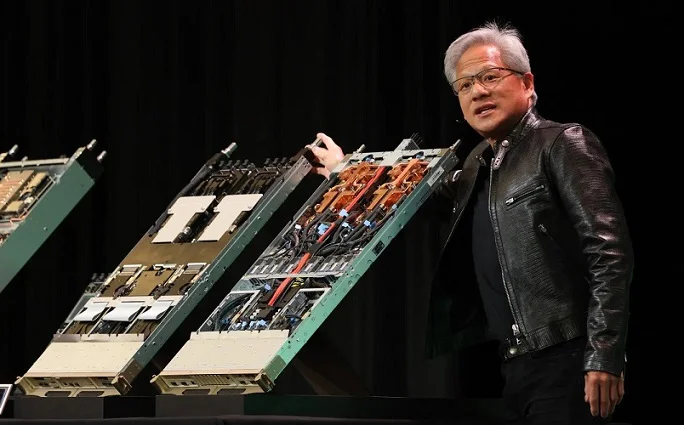

- Nvidia showcases the Vera Rubin superchip integrating two Rubin GPUs, one Vera CPU and an astonishing 17,000 individual components in a single advanced module.

AI News: Nvidia has pulled back the curtain on its next-generation artificial intelligence system, Vera Rubin, a rack-scale powerhouse the company says delivers ten times more performance per watt than its predecessor. With commercial shipments expected in the second half of 2026, the new system arrives at a pivotal moment, as global demand for AI computing surges and energy consumption becomes one of the industry’s most pressing concerns.

The unveiling comes as Nvidia prepares to report another quarter of booming sales for its current Grace Blackwell platform, yet attention is already shifting toward what comes next. Vera Rubin, revealed during an exclusive preview at Nvidia’s headquarters in Santa Clara, represents a sweeping redesign of AI infrastructure. Midway through the presentation, executives emphasized that efficiency, not just raw speed, would define the future of data centers, a positioning that reflects mounting scrutiny of the environmental footprint of AI expansion.

Image Credit: Nvidia

A Global Machine Built at Massive Scale

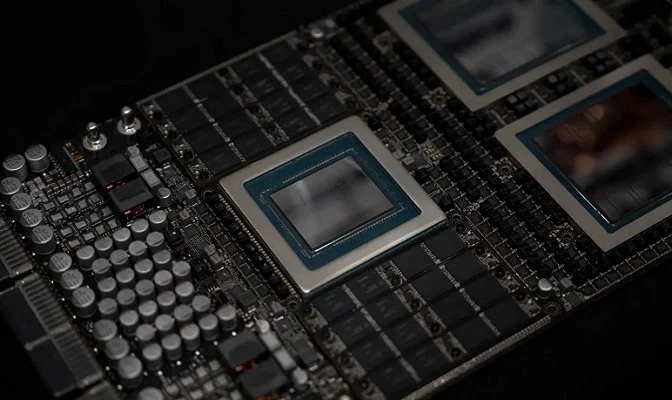

Vera Rubin is staggering in complexity. The system incorporates approximately 1.3 million individual components sourced from more than 80 suppliers across at least 20 countries. Core processing is driven by 72 Rubin GPUs and 36 Vera CPUs, with semiconductor fabrication led by Taiwan Semiconductor Manufacturing Co. Supporting elements—from advanced liquid cooling modules to power delivery systems and compute trays—are sourced globally, including from China, Vietnam, Thailand, Mexico, Israel and the United States.

Each rack weighs nearly two tons and houses about 1,300 microchips, a significant increase over Grace Blackwell’s 864. Although Vera Rubin consumes roughly twice the power of its predecessor, Nvidia claims its architecture yields ten times more performance per watt. For hyperscale operators that measure output in tokens generated per watt consumed, this efficiency gain could dramatically alter operating economics.
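A quick back-of-the-envelope check shows what the two claims imply when taken together. The sketch below assumes that "performance" means token throughput (tokens per second) and takes Nvidia's stated ratios at face value rather than as measured results; the function names are hypothetical and written only for this illustration. Because throughput equals throughput per watt multiplied by watts, roughly twice the power at ten times the efficiency would imply about twenty times the rack-level throughput, and about one-tenth the energy spent per token.

```python
def implied_throughput_ratio(perf_per_watt_ratio: float, power_ratio: float) -> float:
    """Hypothetical helper: throughput = (throughput per watt) x (watts), so the ratios multiply."""
    return perf_per_watt_ratio * power_ratio


def implied_energy_per_token_ratio(perf_per_watt_ratio: float) -> float:
    """Hypothetical helper: energy per token is the reciprocal of tokens per unit of energy."""
    return 1.0 / perf_per_watt_ratio


# Assumption: Nvidia's claimed figures, used as-is (not independently measured data).
PERF_PER_WATT_RATIO = 10.0  # Vera Rubin vs. Grace Blackwell, per Nvidia
POWER_RATIO = 2.0           # "roughly twice the power"

print(implied_throughput_ratio(PERF_PER_WATT_RATIO, POWER_RATIO))  # 20.0
print(implied_energy_per_token_ratio(PERF_PER_WATT_RATIO))         # 0.1
```

Real-world gains would depend on workload, utilization and how "performance" is defined, so these ratios are only as reliable as the claims they start from.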

Memory costs remain a key challenge. Global shortages driven by AI demand have pushed prices higher, forcing Nvidia to work closely with suppliers through detailed forecasting. Company executives insist supply alignment remains strong, even as competitors race to secure capacity.

Image Credit: Nvidia

Modular Design Meets Liquid Cooling

Vera Rubin adopts a more modular layout than earlier systems. Each “superchip” slides out of one of 18 compute trays, allowing faster installation and simpler maintenance. In Grace Blackwell, by contrast, components were soldered directly onto boards, making servicing more complex.

Vera Rubin also marks Nvidia’s first fully liquid-cooled rack-scale system. According to company officials, this design not only enhances thermal performance but can reduce water usage compared with traditional evaporative cooling techniques commonly used in large data centers.

Industry analysts note that pricing will likely reflect the leap in performance. Estimates suggest a 25% increase over Grace Blackwell, potentially placing each rack between $3.5 million and $4 million. While Nvidia does not disclose official pricing, customers appear undeterred. Meta plans to deploy Vera Rubin systems in its data centers by 2027, while other anticipated clients include OpenAI, Anthropic, Amazon, Google and Microsoft.

Rising Competition in the AI Arena

Nvidia remains dominant in AI accelerators, but competition is intensifying. Advanced Micro Devices is preparing to ship its Helios rack-scale system, while companies such as Broadcom and Google continue investing in custom silicon. Major cloud providers are also integrating in-house chips—Amazon with Trainium and Google with TPUs—into their infrastructure.

Even so, building a cohesive rack-scale AI platform is far from straightforward. Integrating compute, networking, cooling and power systems into a unified architecture demands enormous coordination. Analysts say customers increasingly want diversified supply chains, but they also recognize the complexity of replicating Nvidia’s ecosystem.

What emerges from Vera Rubin’s debut is not merely a faster machine but a strategic recalibration of AI infrastructure itself. By prioritizing energy efficiency alongside performance, Nvidia is signaling that the next phase of artificial intelligence growth must balance scale with sustainability. If the company’s claims hold true in real-world deployments, Vera Rubin could reshape how hyperscalers measure value—less about watts consumed and more about intelligence delivered per watt. As AI workloads expand into every sector, from finance to healthcare, the stakes for efficient computing have never been higher. Nvidia’s latest system may well determine how far and how fast the AI revolution can responsibly advance.

For more details, visit: https://www.nvidia.com/en-us/data-center/technologies/rubin/

For the latest news on Nvidia, keep logging on to Thailand AI News.
